Experimental validation

For AI predictions of complex biological phenomena, experimental validation is the ultimate proof of correctness. But experimental validation is itself subject to myriad factors and considerations that can lead to spurious outcomes if not carefully addressed.

Computational experts often engage in heated debates over causality. While we deeply appreciate the debate, as builders of predictive AI tools that model complex biological phenomena, our view is that, in our situation, experimental validation is the only proof that matters. How a computational prediction is made matters far less than whether the experimental results derived from it are consistently valid and reproducible. How many people understand how Google Search actually works? Perhaps a few engineers at Google. Yet we all experience, intuitively, that the platform gives us valid answers whenever we submit a query.

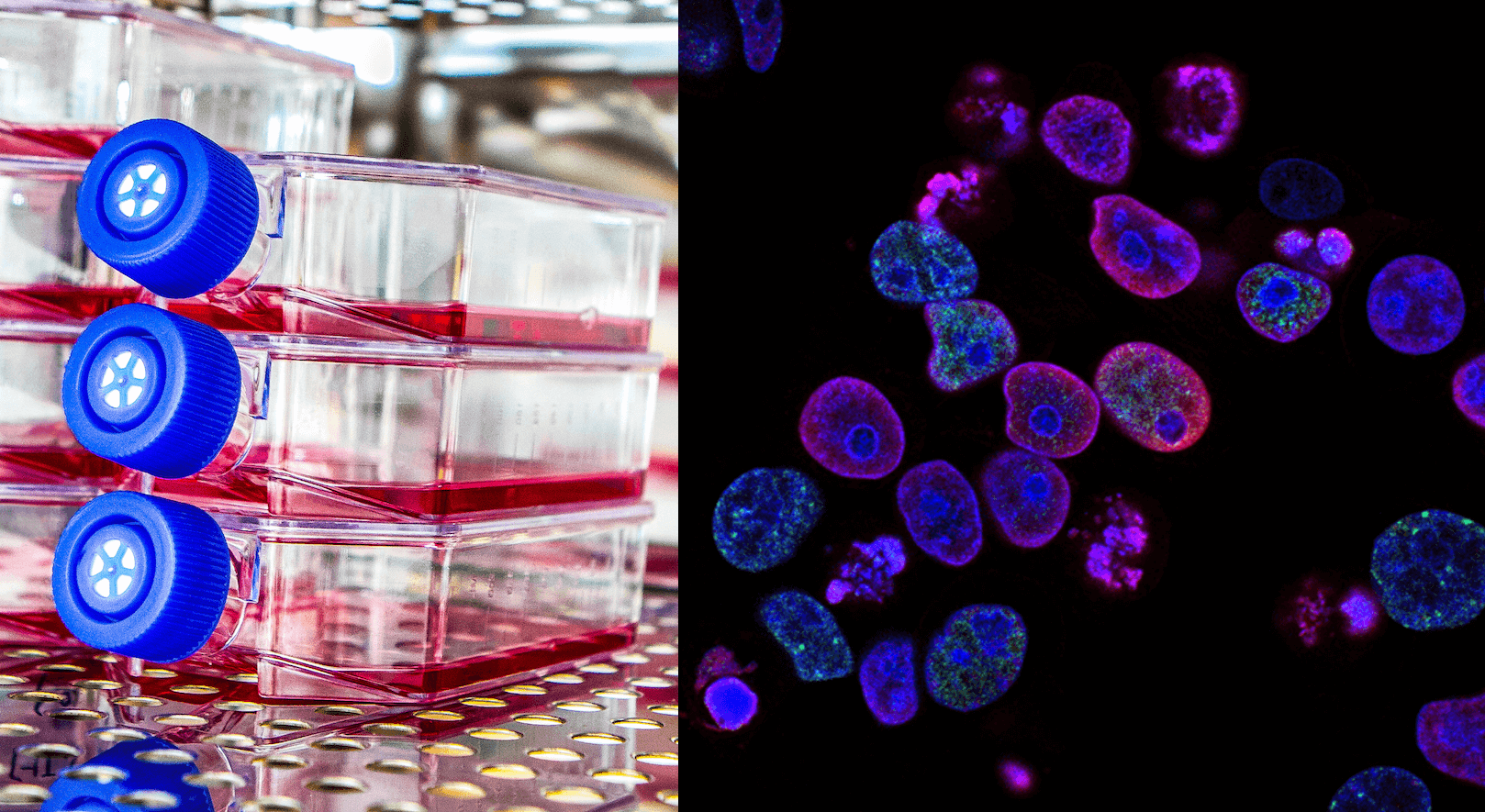

Experimental validation is therefore a critical, inescapable step before a drug program can move forward. In other words, we use our computational platforms to shrink a vast biological problem space and catapult ourselves to an answer. But that answer still needs to be confirmed in the cell and, if it passes that step and others, then in the organism.

Retrospective vs prospective experimental validation

It is worth pointing out that experimental validation can be retrospective or prospective. Retrospective validation checks predictions against experimental data that already exist, while prospective validation tests new predictions with new experiments. We focus this post on prospective experimental validation, with particular emphasis on the biological considerations and the experiments in and of themselves rather than as a referendum on the computational prediction. As a matter of course, however, building performant AI systems requires considerable retrospective experimental validation along the way, which builds confidence that the system’s predictions will also pass prospective validation.

Ideally, the computational prediction narrows the search so much that experimental confirmation becomes a routine operation carried out with focused resources, rather than a luck-based high-throughput screen run by vast teams of researchers and robots. But hard problems remain in reaching that ideal while ensuring that the results are predictive of a curative effect in humans. Indeed, even at the experimental stage, everything is still an informed prediction until the drug is shown to be safe enough to be tested for efficacy in real patients. Early experimental validation simply adds backing and definition to the informed prediction provided by the AI.

For example, the AI answers questions such as “What is the right target to go after to cure this disease?” and “What is the best drug to modulate that target?” Early experimental validation then checks the AI’s answers and adds color to questions such as “Will the target translate to the clinic?”, “Is the candidate drug interacting with unintended targets?”, “Is there unforeseen toxicity?”, and “Should the drug be improved further, or is it already working well enough?”

The factors to account for differ depending on whether the validation is for a target or for a drug molecule, but in either case there are many, and handling them incorrectly can lead to spurious results and a considerable loss of time and resources. In a future post, we will look more closely at the factors we consider in order to minimize spurious validation results. For now, we discuss three of them in broad strokes:

1) The assay must be relevant to human biology

While many biological functions are shared across species, there are also many differences, including situations where the same target, though apparently identical in, say, mice and humans, actually has slightly different functions. So, when selecting a test system (whether a cell, a biophysical assay, or an organism), it is important to confirm that the target in that system has the same functions and state as it does endogenously in humans.

As an example, cancer biologists have learned that testing an anti-cancer drug in mice bearing mouse tumors can give misleading results: the biology of the organism and of the drug target, even if very similar to that of humans, still differs enough to confound the outcome. Instead, the mice that work better for testing anti-cancer drugs destined for humans carry grafted human tumors. These human tumor xenograft models are better predictors of anti-cancer activity in humans because the biology of the tumor and of the target reflects human biology.

There are even more complications. For instance, it is relatively straightforward to create a human xenograft mouse model for a disease like cancer, but how does one create an equivalent model for a disease of the brain? Likewise, tumor shrinkage is easy to measure, whereas the corresponding phenotype of a brain disease is neither as easy to measure nor as easy to replicate in mice.

These are hard challenges to address when designing a testing system. But failing to account for human biology, however hard that is to achieve, is an even bigger mistake.

2) The assay must be relevant to disease mechanism

Just as the testing system should replicate human biology, it should also replicate the disease mechanism to avoid confounding results. For example, it would not make sense to heavily modify a target (e.g., remove a piece of it to give it a desired behavior) and then draw definitive predictive conclusions about the effectiveness of a drug candidate tested on that modified target, because the etiology would then differ from the endogenous disease progression. Such modifications or additions are often made as a compromise to overcome experimental or technical challenges. Our position, however, is that they introduce spurious elements, and that every effort should be made to avoid them and instead to use testing systems that replicate the unmodified disease mechanism as closely as possible.

Returning to the cancer xenograft example above, it has been observed that grafts of actual patient-derived tumors can be even more predictive, driving home the message that not only the biology of the organism but also the specific etiology of the disease matters.

3) The timepoints for intervention and endpoint measurements must be relevant to disease outcomes

The choice of endpoint itself is very important, especially for a disease without an obvious endpoint. The timepoint of intervention, however, is a subtler but particularly serious factor. Essentially, was the intervention applied before the phenotype had a chance to develop, or after confirming that the phenotype had developed? The former is analogous to testing for a prophylactic effect in people who have not yet developed the disease, while the latter tests for a cure in patients who already have the disease, which is the typical situation. The two are not necessarily mutually exclusive, but they are different, and the nuance matters: choosing incorrectly can have adverse consequences for a project.

Mishandling these three factors is among the most common mistakes we see across the entire field. These considerations are not usually taught in school, are hard to recognize, and can be extremely difficult to account for given experimental and technical constraints.

There are, of course, many other considerations beyond these three, including ensuring that the measured effects are caused by the drug and are on-target, addressing toxicity, and ensuring reproducibility, which we will discuss in future posts.